Table of Contents

Post-hoc Tests

Introduction

If you find any significant main effect or interaction with an ANOVA test, congrats, you have at least one significant difference in your data. However, an ANOVA test doesn't tell you where you have the differences. Thus, you need to do one more test–a post-hoc test–to find where they exist.

Comparisons for main effects

As we have seen in the Anova page, there are two kinds of effects you may have: main effects, and interactions (if your model is two-way or more). The comparison for the main effect is fairly straightforward, but there are four kinds of comparisons you can make, so you have to decide which one you want to do.

Before talking about the differences of the comparisons, you need to know one important thing. Some of the post-hoc comparisons may not be appropriate for repeated-measure ANOVA. I am not 100% sure about this point, but it seems that some of the methods do not consider the within-subject design. So, my current suggestion is that if the factor you are comparing in a post-hoc test is a within-subject factor, it might be safer to use a t test with the Bonferroni's or Sidak's correction.

OK., back to the discussion about the types of comparisons. For the discussion, let's say you have three groups: A, B, and C.

- [Case 1] Compare every possible combination of your groups: In this case, you compare everything. You will compare A and B, B and C, and C and A. But you also compare A and B+C (the mean of B and C), B and C+A, C and A+B.

- [Case 2] Compare every group: In this case, you will compare A and B, B and C, and C and A. But you do not compare A and B+C, B and C+A, C and A+B.

- [Case 3] Compare every group against the control: In this case, you will compare the groups only against the control condition. If your control condition is A, you will compare A and B, and A and C.

- [Case 4] Compare the data against the within-subject factor: In this case, you will make a comparison against the within-subject factor. In other words, if you have done repeated-measure ANOVA, this is the way to do a post-hoc test. The methods for this case can be used for tests against between-subject factors.

The strict definition of a post-hoc test means a test which does not require any plan for testing. In the four examples above, only Case 1 satisfies the strict definition of a post-hoc test because the others cases require some sort of planning on which groups to compare or not to compare. However, the term of a post-hoc test is often used for meaning Case 2 and Case 3. Case 4 is usually called a planned comparison test, but again it is often referred as a post-hoc test as well. In this wiki, I use a post-hoc test to mean all of these four tests.

The key point here is you need to figure out which case you want to do for the comparisons after ANOVA. Make sure what comparisons you are interested in before doing any test.

Comparisons for interactions

If you find a significant interaction, things are a little more complicated. This means that the effect of one factor depends on the conditions controlled by the other factors. So, what you need to do is to make comparisons against one factor with fixing the other factors. Let's say you have two Devices (Pen and Touch) and three Techniques (A, B, and C) as we have seen in the two-way ANOVA example. If you have found the interaction, what you need to look at is:

- The effect of Technique under Device = Pen;

- The effect of Technique under Device = Touch;

- The effect of Device under Technique = A;

- The effect of Device under Technique = B; and

- The effect of Device under Technique = C.

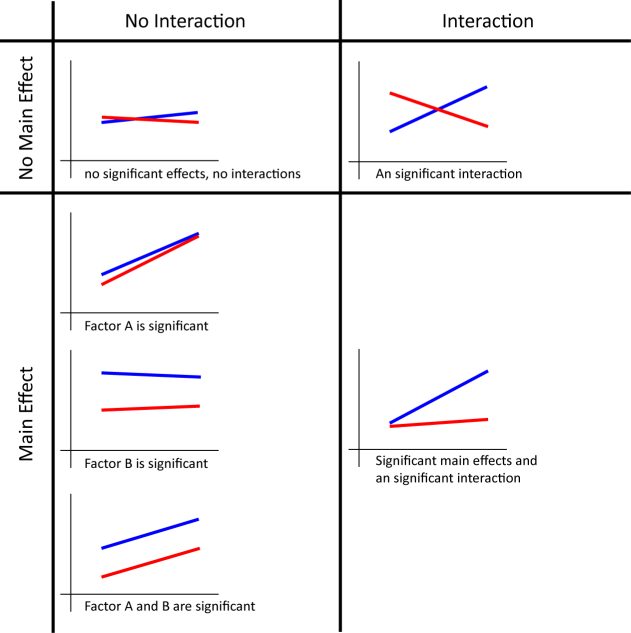

The effects we look at here are called simple main effects. Although you could report all the simple main effects you have, it might become lengthy. Rather, providing a graph like above is easier to understand what kinds of trends your data have. Your graph will look like one of the following graphs depending of the existence of main effects and interactions.

It is also said that you shouldn't report the main effects (not simple main effects) when you have a significant interaction. This is because significant differences are caused by some combination of the factors, and not necessarily caused by a single factor. However, I think that it is still good to report any significant main effects even if you have a significant interaction because it tells us what the data look like. Again, providing a graph is very helpful for your readers to understand your data.

The sad news is that it is not as easy in R to do post-hoc test as in SPSS. You often have to do a lot of things manually or some methods are not really supported in R. So, particularly if you are doing two-way ANOVA and you have an access to SPSS, it may be better to use SPSS.

Main effect: Comparing every possible combination of your groups (Scheffe's test)

Scheffe's test allows you to do a comparison on every possible combination of the group you are caring about. Unfortunately, there is no automatic way to do Scheffe's test in R. You have to do it manually. The following procedure is based on Colleen Moore & Mike Amato's material.

We use the same example for one-way ANOVA.

We have a significant effect of Group. So, we need to look into that effect more deeply. First, you have to define the combination of the groups for your comparison.

The each element of coeff intuitively means the weight for the later calculation. The positive values and negative values represent data to compare. In this case, the first element is 1, and the second element is -1. And the first, second, and third elements will represent Group A, B, and C, respectively. So, this coeff means we are going to compare Group A and Group B. The third element is 0, which means Group C will be ignored.

Another key point is the sum of the elements must be 0. Here are some other examples.

- If you have three groups (A, B, C), and you want to compare Group B and C, coeff = c(0, 1, -1).

- If you have three groups (A, B, C), and you want to compare Group A and the mean of Group B and C, coeff = c(2, -1, -1).

- If you have five groups (A, B, C, D, E), and you want to compare the mean of Group A and B, and the mean of Group C, D and E, coeff = c(2/3, 2/3, 2/3, -1, -1).

Let's come back to the example. Now we need to calculate the number of the samples and the mean for each group.

We then calculate some statistical values.

mspsi should be 7.5625 if you have followed the example correctly. Now we are going to calculate the F value. For this, we need to know the value for the mean square for the errors (or residuals). According to the result of the anova test, it is 0.9405 (Did you find it? Go back to the very first of this example and see the result of summary(aov)). We also need to know the degree of freedom of the factor we are caring about (in this example, Group), which is 2.

Finally, we can calculate the p value for this comparison (comparison between Group A and Group B). For the degrees of freedom, we can use the same ones for the anova test, which is 2 for the first DOF, 21 for the second DOF (Find them in the result of the anova test).

Thus, the difference between Group A and B is significant because p < 0.05.

So, if you really want to do all possible comparisons, you probably want to write some codes so that you can do a batch operation. I think it should be straightforward, but be careful about the design of the comparison (i.e., coeff).

I am not 100% sure, but Scheffe's test may not be appropriate for repeated-measure ANOVA. For repeated-measure ANOVA, you can do a pairwise comparison with the Bonferroni's or Sidak's correction.

Main effect: Comparing every group (Tukey's test)

This is probably the most common case in HCI research. You want to compare the two of the groups, but you are not interested in any combination of more than one group. One of the common methods for this case is Tukey's method. The way to do a Tukey's test in R depends on how you have done ANOVA.

First, let's take a look at how to do a Tukey's test with the aov() function. We use the same example of one-way ANOVA.

Now, we do a Tukey's test.

So, we have significant differences between A and B, and A and C.

If you use the Anova() function, the way to do a Tukey's test is a little different. Here, we use the same example of two-way ANOVA, but the values for Time were slightly changed (to make the interaction effect disappear).

With the anova test, you will find the main effects. So, you need to run a Tukey's test. You have to do the following thing to do a Tukey's test with the model created by lm(). You need to include multcomp package beforehand.

Then, you get the results.

So, we have significant differences between any of the two groups in both Device and Technique.

It seems both ways to do a Tukey's test can accommodate mildly unbalanced data (the number of the samples are different cross the groups, but not that different). The Tukey's test which can handle mildly unbalanced data is also called Tukey-Kramer's test. If you have largely unbalanced data, you can't do a Tukey's test nor even ANOVA, and instead you should do a non-parametric test.

Another thing you should need to know is that Tukey may not be appropriate as a post-hoc test for repeated-measure ANOVA. This is because Tukey assumes the independency of the data across the groups. You can actually run a Tukey's test even for repeated-measure ANOVA with the second method (you need to use lme() in nlme package to build a model instead of lm()). As far as I can tell, it seems ok to use a Tukey's test for repeated-measure data, but I am not 100% sure yet.

With some statistical software, you can also run a Tukey's test for an interaction term. Unfortunately, it seems that it is not possible to do so in R (as of July 2011). I think this makes sense because you can simply look at simple main effects if you have a significant interaction term. But if you know a way to run a Tukey's test for an interaction term, please let me know.

Main effect: Comparing every group against the control (Dunnett's test)

In this case, you have one group which can be called “the reference group”, and you want to compare the groups only against this reference group. One common test is a Dunnett's test, and fortunately the way to do a Dunnett's test is as easy as a Tukey's test.

Let's use an example of the one-way ANOVA test.

Now, you are going to do a Dunnett's test. You need to include multcomp package.

As you can see in the result, the Dunnett's test only did comparisons between Technique A and B, and between Technique A and C. In this method, the first group in the factor you are caring about is used as the reference case (in this example, Technique A). So, you need to design the data so that the data for the reference case are associated with the first group in the factor.

You may have also noticed that the p values become smaller than those in the results of a Tukey's test.

| Tukey | Dunnett | |

|---|---|---|

| A - B | 0.0257122 | 0.018472 |

| A - C | 0.0002167 | 0.000149 |

Generally, a Dunnett's test is more sensitive (has more powers to find a significant difference) than a Tukey's test. At a cost of this power, you must assume the reference case for the comparisons.

You can also use the model generated by aov() in the lm() function. Here is another example of a Dunnett's test for two-way ANOVA.

Now, you get the result.

Main effect: Comparing data against the within-subject factor (Bonferroni correction, Holm method)

Another case you would commonly have is that you have within-subject factors. Unfortunately, in this case, the methods explained above may not be appropriate because they do not take the relationship between the groups into account. Instead, you can do a paired t test with some corrections to avoid some troubles caused by doing multiple t tests. The major corrections are Bonferroni correction and Holm method. Fortunately, you can do both quite easily in R. Let's take a look at the example of one-way repeated-measure ANOVA.

Doing multiple t tests with Bonferroni correction is as follows.

If you want to use Holm method, you just need to change the value of p.adj (or remove the argument of p.adj completely. R uses Holm method by default).

Other kinds of corrections are also available (type ?p.adjust.methods in the R console). But I think these two are usually good enough. It is considered that you should avoid using Bonferroni correction when you have more than 4 groups to compare. This is because Bonferroni correction becomes too strict for those cases.

You can also use the methods above for between-subject factors instead of using other methods (like Tukey's test). In this case, you just need to do something like:

So, which one to use?

There are several other things which may help you choose a particular method in addition to the properties explained above.

- A Dunnet's test has a stronger power (more likely to find a significant difference) than other methods. (But you need to assume the reference case).

- A Tukey's test (or Tukey-Kramer's test) is more strict or conservative (less likely to find a significant difference) than the pairwise comparison with the Bonferroni correction when the number of the groups to compare is small. But when the number of the groups to compare is large, a Tukey's test becomes less strict than the pairwise comparison with the Bonferroni correction.

- The pairwise comparison with the Bonferroni correction often becomes too strict when the number of the groups to compare is large. Generally, when the number of the groups is more than six, we want to avoid using this.

- A Scheffe's test tends to be strict because it compares all possible combination.

In HCI research, the most common method I have seen is either a Tukey's test or pairwise comparison with the Bonferroni correction. So, if you don't have any particular comparison in mind, these two would be the first thing you may want to try.

Interaction

If you have any significant interaction, you need to look at the data more carefully because more than 0ne factor contribute to significant differences. There are two ways to look into the data: simple main effects and interaction contrasts.

Simple main effect

The idea of the simple main effect is very intuitive. If you have n factors, you pick up one of them, fix it, and do a test for the n-1 factors. In our two-way ANOVA example, there are five tests for looking at simple main effects:

- A comparison against Technique for the data with Device = Pen;

- A comparison against Technique for the data with Device = Touch;

- A comparison against Device for the data with Technique = A;

- A comparison against Device for the data with Technique = B; and

- A comparison against Device for the data with Technique = C.

Let's do it with the example of two-way ANOVA.

This ANOVA test shows you have a significant interaction, so now we are going to look at the simple main effects. First, we are going to look at the difference caused by Technique for the Pen condition. For this, we pick up data only for the Pen condition.

We can then simply run a one-way ANOVA test against Technique.

So, we have a significant simple effect here. We can do tests for other simple main effects in a similar way.

If you only have two groups to compare, you can just do a t test as you can see above. In this example, you will find significant simple effects in all the five tests. But if the main effects are so powerful, we cannot find clear interactions of the factors with tests for simple main effects. In such cases, we need to switch to doing tests for interaction contrasts.

Interaction Contrast

Interaction contrasts basically mean you compare every combination of the two groups. So it seems kind of similar to Scheffe's test. I haven't figured out how to do interaction contrasts in R. I will add it when I figure out.

Do I really need to run ANOVA?

Some of you may have noticed that some post-hoc test methods explained here do not use any result gained from aov() or Anova() function. For example. post-hoc tests with Bonferroni or Holm correction do not use the results of the ANOVA test.

In theory, if you use post-hoc methods which do not require the result of the ANOVA test, you don't need to run ANOVA, and can directly run post-hoc methods. However, running ANOVA and then running post-hoc tests is kind of a de-facto standard, and most of the readers expect the results of ANOVA (and the results of post-hoc tests in the case you find any statistical difference through the ANOVA test). Thus, in order to avoid the confusion that the reader might have, it is probably better to run ANOVA regardless of whether it is theoretically needed.

How to report

In the report, you need to describe which post-hoc test you used and where you found the significant differences. Let's say you are using a Tukey's test and have the results like this.

Then, you should report as follows:

A Tukey's pairwise comparison revealed the significant differences between A and B (p < 0.05), and between A and C (p < 0.01).

Of course, you need to report the results of your ANOVA test before this.

Discussion

"covariate interactions found -- default contrast might be inappropriate"

Please help me to solve below mention equation for dunnett test.

I know how calculate q as per below mention formula.

q= Mean1-Mean2 / SEDifference

But I could not understand how to calculate adjusted p value as per below mention formula.

pValue(adjusted) = PFromQDunnett(q,DF,M).

Please provide example if possible or reference for the same.

Is there a way to run Scheffe test in R with the model from Anova function (in 'car' package)?

thank you for the great tutorial!

I have a one question:

which post-hoc test should be used in case of a mixed-design?

I have one between-subjects factor (2 levels) and one within-subjects factor (6 levels) and Anova showed significance of both main effects as well as of the interaction. Which post-hoc test should I run now?

Hana

<a href=http://jakirower.co.pl>opinie o Kross level</a>

http://www.alpassocoitempi.it/651-ray-ban-vista-uomo-indossati.htm

http://www.io-riciclo.it/374-scarpe-nike-air-force-nuove

http://www.clinicaviaemilia.it/nike-free-run-2-753

http://www.succesfou.fr/casquette-miami-heat-foot-locker-662.html

http://www.caminowatch.fr/gazelle-adidas-homme-120.html

<a href=http://www.chaletinterclubmontventoux.fr/234-air-max-2016-blanche-femme-pas-cher.php>Air Max 2016 Blanche Femme Pas Cher</a>

<a href=http://www.biotoxen.it/311-cappelli-nike-visiera-piatta.php>Cappelli Nike Visiera Piatta</a>

<a href=http://www.tissages-de-gravigny.fr/nike-thea-femme-blanche.html>Nike Thea Femme Blanche</a>

<a href=http://www.casevacanzesottoalduomo.it/nike-air-max-90-blu-470.php>Nike Air Max 90 Blu</a>

<a href=http://www.faustoparavidino.it/oakley-pitchman.aspx>Oakley Pitchman</a>

http://www.animalcare.fr/stan-smith-effet-miroir-842.html

http://www.lucilepeuch.fr/nike-huarache-point-de-vente-920.php

http://www.modeprice.fr/537-sac-longchamps-kate-moss.php

http://www.casevacanzesottoalduomo.it/nike-air-max-90-bianche-amazon-016.php

http://www.semioticamente.it/nike-sb-zoom-air-paul-rodriguez-2

<a href=http://www.hotelcentrevalleebleue.fr/404-new-balance-u420-bleu-et-rouge.html>New Balance U420 Bleu Et Rouge</a>

<a href=http://www.les-amis-de-nicolas-sarkozy.fr/931-nike-sb-janoski-lunar-pas-cher.php>Nike Sb Janoski Lunar Pas Cher</a>

<a href=http://www.tissages-de-gravigny.fr/air-max-thea-grise-et-orange.html>Air Max Thea Grise Et Orange</a>

<a href=http://www.turismolastminute.it/334-vans-bordeaux-indossate.html>Vans Bordeaux Indossate</a>

<a href=http://www.biotoxen.it/032-cappello-adidas-visiera-curva.php>Cappello Adidas Visiera Curva</a>

http://www.autoescuelaalcon.es/

http://www.mantenimientodejardines.com.es/

http://www.costa-anatomicas.es/

http://www.pintorespontevedra.es/

http://www.pharmaceutical-care.es/

<a href=http://www.terrazasdemadera.es/>levitra sin receta</a>

<a href=http://www.mantenimientodejardines.com.es/>levitra original</a>

<a href=http://www.planie.es/>cialis sin receta</a>

<a href=http://www.limpiezasyserviciosterres.es/>kamagra gel</a>

<a href=http://www.pintorespontevedra.es/>kamagra opiniones</a>

http://www.floating-studio-flats.co.uk/985-air-max-thea-blue-lagoon.html

http://www.mmua.co.uk/867-nike-cortez-white-velvet-brown.html

http://www.southportsuperbikeshop.co.uk/374-nike-air-max-95-dyn.html

http://www.cars-wrapping.co.uk/719-nike-free-rn-flyknit-motion.html

http://www.cars-wrapping.co.uk/013-nike-free-flyknit-5.0-men.html

<a href=http://www.jlapressureulcerpartnership.co.uk/reebok-khaki-classic-077.htm>Reebok Khaki Classic</a>

<a href=http://www.attention-deficit-disorder.co.uk/nike-air-max-2016-release-768.html>Nike Air Max 2016 Release</a>

<a href=http://www.marrandmacgregor.co.uk/159-adidas-yeezy-boost-size-6.html>Adidas Yeezy Boost Size 6</a>

<a href=http://www.decorator-norwich.co.uk/554-nike-free-4.0-black-and-white>Nike Free 4.0 Black And White</a>

<a href=http://www.ofpeopleandplants.co.uk/nike-air-max-2015-review-runners-world-479.html>Nike Air Max 2015 Review Runner's World</a>

http://offer.moscow/

http://www.cars-wrapping.co.uk/988-nike-free-run-flyknit-4.0-womens-running-shoes.html

http://www.southportsuperbikeshop.co.uk/473-nike-air-max-95-hiroshi.html

http://www.evoslimmingcoupon.co.uk/air-jordan-black-and-purple-359.php

http://www.giantfang.co.uk/air-max-90-woven-vachetta-tan-144

http://www.giantfang.co.uk/nike-huarache-ultra-breathe-mens-354

<a href=http://www.mobiledeals4contractphones.co.uk/oakley-sunglasses-cheap-on-facebook-213.html>Oakley Sunglasses Cheap On Facebook</a>

<a href=http://www.bike-courier.co.uk/nike-flyknit-racer-blackout-on-feet-867.html>Nike Flyknit Racer Blackout On Feet</a>

<a href=http://www.ukfinanceguide.co.uk/994-nike-air-max-one-ultra-moire.html>Nike Air Max One Ultra Moire</a>

<a href=http://www.usapokergame.co.uk/127-adidas-ultra-boost-black-triple.html>Adidas Ultra Boost Black Triple</a>

<a href=http://www.offerzone.co.uk/418-converse-womens-uk.htm>Converse Womens Uk</a>

http://www.12deliver.nl/594-saucony-triumph-iso-2-dames.php

http://www.deermedia.de/hollister-weste-grau

http://www.hannover-cms.de/947-abercrombie--fitch-pullover-classic-crew

http://www.gasthofbahra.de/ray-ban-sonnenbrille-damen-869.html

http://www.12deliver.nl/715-saucony-triumph-iso-2-runners-world.php

<a href=http://www.zorgboerderijdaglicht.nl/653-free-running.htm>Free Running</a>

<a href=http://www.srbijaleverkusen.de/nike-free-damen-türkis-schwarz-684.php>Nike Free Damen Türkis Schwarz</a>

<a href=http://www.karmacosmic.de/859-stefan-janoski-damen-schuhe.html>Stefan Janoski Damen Schuhe</a>

<a href=http://www.fsv-friends.nl/502-air-max-thea-premium.html>Air Max Thea Premium</a>

<a href=http://www.pietjebelldemusical.nl/494-adidas-schoenen-wit-met-goud.html>Adidas Schoenen Wit Met Goud</a>

http://www.ofpeopleandplants.co.uk/nike-air-max-2015-mens-colorways-779.html

http://www.floating-studio-flats.co.uk/042-air-max-thea-outfit.html

http://www.lanarkunitedfc.co.uk/997-nike-air-max-90-iron-metallic-red-bronze-leather

http://www.pro-trak.co.uk/saucony-hurricane-15-538.htm

http://www.mutantsoftware.co.uk/adidas-originals-london-trainers-487.php

<a href=http://www.simplisecurity.co.uk/puma-creepers-rihanna-red-639.html>Puma Creepers Rihanna Red</a>

<a href=http://www.youthopinionsunite.co.uk/adidas-gazelle-og-trainers-290.php>Adidas Gazelle Og Trainers</a>

<a href=http://www.marrandmacgregor.co.uk/504-adidas-yeezy-boost-350-pirate-black-2.0.html>Adidas Yeezy Boost 350 Pirate Black 2.0</a>

<a href=http://www.floating-studio-flats.co.uk/553-air-max-shoes-for-men-2015.html>Air Max Shoes For Men 2015</a>

<a href=http://www.giantfang.co.uk/air-max-90-white-on-feet-954>Air Max 90 White On Feet</a>

http://www.modern-course.de/311-nike-air-max-thea-weiß-bestellen.php

http://www.ausbildung-in-pflegeberufen.de/nike-air-max-command-damen-blau-685.html

http://www.frauenuni-dresden.de/nike-free-5.0-schwarz-herren-günstig-010.html

http://www.ulviks.nl/303-cortez-nike-dames.html

http://www.ikbenalles.nl/849-oakley-zonnebril-op-sterkte-prijs.php

<a href=http://www.savanna-interior-shop.nl/839-golden-state-warriors-new-era.html>Golden State Warriors New Era</a>

<a href=http://www.avarusmedia.de/oakley-holbrook-polarized-gläser-314.php>Oakley Holbrook Polarized Gläser</a>

<a href=http://www.nikeflyknitkaufen.de/nike-free-4.0-flyknit-damen-schwarz-793>Nike Free 4.0 Flyknit Damen Schwarz</a>

<a href=http://www.leserlichundhoerich.de/nike-air-max-1-gs-510.php>Nike Air Max 1 Gs</a>

<a href=http://www.wvaegir.nl/adidas-superstar-dames-glitter-898.php>Adidas Superstar Dames Glitter</a>

http://www.anytekabel.de/854-nike-shox-kaufen.asp

http://www.hilfeplanverfahren.de/854-nike-air-force-weiß-niedrig.php

http://www.hipcatclub.de/vans-sk8-high-white-794.htm

http://www.gombosportal.de/733-louboutin-high-heels-rot.php

http://www.surfsapiens.nl/asics-dames-gel-dedicate-3-indoor.htm

<a href=http://www.400jaarveenkolonien.nl/longchamp-schoudertas>Longchamp Schoudertas</a>

<a href=http://www.avarusmedia.de/oakley-sonnenbrille-wechselgläser-330.php>Oakley Sonnenbrille Wechselgläser</a>

<a href=http://www.creativesweat.de/713-saucony-fastwitch-6-zalando.html>Saucony Fastwitch 6 Zalando</a>

<a href=http://www.vintagecadillacs.nl/100-air-max-thea-maat-43.htm>Air Max Thea Maat 43</a>

<a href=http://www.consolidate-it.nl/166-nike-huarache-zwart-grijs>Nike Huarache Zwart Grijs</a>

http://www.evoslimmingcoupon.co.uk/air-jordan-grey-and-blue-978.php

http://www.frankluckham.co.uk/adidas-superstar-all-colours-592.php

http://www.accomlink.co.uk/adidas-gazelle-bluebird-747

http://www.northhampshireenterprise.co.uk/nike-janoski-obsidian-791.html

http://www.usapokergame.co.uk/102-adidas-ultra-boost-black-2.0.html

<a href=http://www.itsupportlondonbridge.co.uk/adidas-stan-smith-shoes-2016-001.asp>Adidas Stan Smith Shoes 2016</a>

<a href=http://www.bike-courier.co.uk/nike-shox-basketball-2004-714.html>Nike Shox Basketball 2004</a>

<a href=http://www.lanarkunitedfc.co.uk/726-nike-air-max-90-essential-atomic-red>Nike Air Max 90 Essential Atomic Red</a>

<a href=http://www.jlapressureulcerpartnership.co.uk/reebok-black-286.htm>Reebok Black</a>

<a href=http://www.tableoakfurnitureland.co.uk/nike-air-max-2017-shoes.html>Nike Air Max 2017 Shoes</a>

http://www.grupoitealbacete.es/255-puma-creepers-velvet-negras.html

http://www.luismesacastilla.es/756-nike-roshe-run-navy.php

http://www.rcaraumo.es/nike-blazer-high-228.html

http://www.younes.es/203-nike-air-max-zero-qs

http://www.adidasnmdcomprar.nu/adidas-nmd-oreo-635

<a href=http://www.luismesacastilla.es/095-nike-roshe-rojas-hombre.php>Nike Roshe Rojas Hombre</a>

<a href=http://www.el-codigo-promocional.es/055-reebok-nano-4.0.aspx>Reebok Nano 4.0</a>

<a href=http://www.sanjuandelamata.es/108-nike-free-rn-flyknit-zalando.html>Nike Free Rn Flyknit Zalando</a>

<a href=http://www.adidasnmdcomprar.nu/adidas-nmd-red-camo-257>Adidas Nmd Red Camo</a>

<a href=http://www.cdoviaplata.es/outlet-zapatos-mbt-en-barcelona-629.html>Outlet Zapatos Mbt En Barcelona</a>

http://www.younes.es/717-nike-air-max-one-ultra-moire

http://www.cdoviaplata.es/mbt-españa-compra-online-648.html

http://www.younes.es/731-zapatillas-air-max-2016-para-ni帽os

http://www.aspasi.es/150-saucony-running.htm

http://www.rcaraumo.es/stefan-janoski-max-l-qs-757.html

<a href=http://www.luismesacastilla.es/537-nike-roshe-precio.php>Nike Roshe Precio</a>

<a href=http://www.bristol.com.es/nike-air-force-one-españa-451.html>Nike Air Force One España</a>

<a href=http://www.younes.es/008-air-huarache>Air Huarache</a>

<a href=http://www.felipealonso.es/709-vans-sk8-hi-platform-comprar.html>Vans Sk8 Hi Platform Comprar</a>

<a href=http://www.younes.es/994-air-max-90-rojas>Air Max 90 Rojas</a>

http://www.giantfang.co.uk/nike-air-max-2015-blue-753

http://www.youthopinionsunite.co.uk/adidas-gazelle-canada-717.php

http://www.evoslimmingcoupon.co.uk/air-jordan-future-grey-black-486.php

http://www.howzituk.co.uk/644-nike-roshe-flyknit-blue-and-black.html

http://www.waterfallrainbows.co.uk/nike-air-presto-thunder-blue-105.php

<a href=http://www.backpackersholidays.co.uk/972-nike-air-force-1-low-blue-suede.html>Nike Air Force 1 Low Blue Suede</a>

<a href=http://www.hairextensionscity.co.uk/808-roshe-run-outfits-tumblr.html>Roshe Run Outfits Tumblr</a>

<a href=http://www.youthopinionsunite.co.uk/womens-adidas-gazelle-og-trainers-pink-512.php>Womens Adidas Gazelle Og Trainers Pink</a>

<a href=http://www.howzituk.co.uk/122-flyknit-racer-custom.html>Flyknit Racer Custom</a>

<a href=http://www.cyberville.co.uk/934-new-balance-574-fashion.htm>New Balance 574 Fashion</a>

http://www.younes.es/257-air-max-one-2014

http://www.dekodery.eu/nike-sb-stefan-janoski-para-mujer.html

http://www.luismesacastilla.es/631-nike-roshe-flyknit-baratas.php

http://www.adidasnmdcomprar.nu/adidas-nmd-dorados-489

http://www.batalladefloreslaredo.es/ferrari-oakley-521

<a href=http://www.rcaraumo.es/flyknit-nike-air-max-1-799.html>Flyknit Nike Air Max 1</a>

<a href=http://www.denisemilani.es/981-nike-huarache-2015.php>Nike Huarache 2015</a>

<a href=http://www.lubpsico.es/ultra-boost-adidas-white-782.html>Ultra Boost Adidas White</a>

<a href=http://www.sedar2013.es/gorras-phoenix-coyotes-740.php>Gorras Phoenix Coyotes</a>

<a href=http://www.colegio-sanfranciscojavier.es/699-zapatos-timberland-gris.html>Zapatos Timberland Gris</a>

http://www.mandala2012.co.uk/390-adidas-shoes-women-white-and-black.html

http://www.ofpeopleandplants.co.uk/nike-2015-air-max-white-582.html

http://www.mmua.co.uk/512-nike-cortez-mens.html

http://www.howzituk.co.uk/326-nike-flyknit-air-max-2016-grey.html

http://www.accomlink.co.uk/adidas-superstar-supercolor-red-womens-408

<a href=http://www.mobiledeals4contractphones.co.uk/oakley-holbrook-lenses-malaysia-583.html>Oakley Holbrook Lenses Malaysia</a>

<a href=http://www.jlapressureulcerpartnership.co.uk/reebok-classic-leather-womens-642.htm>Reebok Classic Leather Womens</a>

<a href=http://www.hairextensionscity.co.uk/270-nike-roshe-run-original.html>Nike Roshe Run Original</a>

<a href=http://www.misstilly.co.uk/nike-outlet-shop-online-uk-709.htm>Nike Outlet Shop Online Uk</a>

<a href=http://www.aranjackson.co.uk/timberland-deck-shoes-sale-631.php>Timberland Deck Shoes Sale</a>

http://www.lubpsico.es/adidas-stan-smith-colores-485.html

http://www.el-codigo-promocional.es/384-reebok-blancas-mujer.aspx

http://www.elregalofriki.es/ray-ban-erika-man-318.php

http://www.ibericarsalfer.es/nike-running-mujer-outlet-887.html

http://www.grupoitealbacete.es/984-puma-bmw-2016.html

<a href=http://www.rcaraumo.es/huarache-ultra-baratas-168.html>Huarache Ultra Baratas</a>

<a href=http://www.luismesacastilla.es/061-nike-roshe-run-grises-y-rosas.php>Nike Roshe Run Grises Y Rosas</a>

<a href=http://www.batalladefloreslaredo.es/oakley-nueva-coleccion-435>Oakley Nueva Coleccion</a>

<a href=http://www.aspasi.es/405-saucony-peregrine-6-opiniones.htm>Saucony Peregrine 6 Opiniones</a>

<a href=http://www.rcaraumo.es/nike-stefan-janoski-max-azules-042.html>Nike Stefan Janoski Max Azules</a>

http://www.rcaraumo.es/huarache-blancas-y-verdes-087.html

http://www.denisemilani.es/622-nike-95-air-max.php

http://www.rcaraumo.es/nike-cortez-en-chile-465.html

http://www.el-codigo-promocional.es/651-zapatos-reebok-clasicos-para-niños.aspx

http://www.ibericarsalfer.es/nike-hombre-amazon-874.html

<a href=http://www.tacadetinta.es/chalecos-abercrombie-para-hombre-precio>Chalecos Abercrombie Para Hombre Precio</a>

<a href=http://www.adidasnmdcomprar.nu/adidas-nmd-gray-454>Adidas Nmd Gray</a>

<a href=http://www.lubpsico.es/superstar-adidas-adicolor-869.html>Superstar Adidas Adicolor</a>

<a href=http://www.elregalofriki.es/como-identificar-gafas-de-sol-originales-ray-ban-588.php>Como Identificar Gafas De Sol Originales Ray Ban</a>

<a href=http://www.younes.es/328-nike-air-max-90-hyperfuse-black-and-white>Nike Air Max 90 Hyperfuse Black And White</a>

http://www.bike-courier.co.uk/nike-roshe-run-2-011.html

http://www.attention-deficit-disorder.co.uk/air-max-2016-red-980.html

http://www.floating-studio-flats.co.uk/413-air-max-95-greedy.html

http://www.lanarkunitedfc.co.uk/314-nike-air-max-90-ultra-breathe-womens-shoe

http://www.wandsworth-plumbing.co.uk/ray-ban-wayfarer-tortoise-331.htm

<a href=http://www.itsupportlondonbridge.co.uk/adidas-stan-smith-core-black-152.asp>Adidas Stan Smith Core Black</a>

<a href=http://www.howzituk.co.uk/698-nike-flyknit-blue-and-green.html>Nike Flyknit Blue And Green</a>

<a href=http://www.pro-trak.co.uk/saucony-cohesion-5-121.htm>Saucony Cohesion 5</a>

<a href=http://www.giantfang.co.uk/air-max-tavas-red-on-feet-233>Air Max Tavas Red On Feet</a>

<a href=http://www.lanarkunitedfc.co.uk/829-air-max-90-black>Air Max 90 Black</a>

http://www.floating-studio-flats.co.uk/488-air-max-sneakers-for-women.html

http://www.aranjackson.co.uk/timberland-earthkeepers-boat-shoe-196.php

http://www.northhampshireenterprise.co.uk/nike-janoski-max-all-white-304.html

http://www.waterfallrainbows.co.uk/nike-air-presto-fleece-549.php

http://www.cars-wrapping.co.uk/695-nike-free-rn-flyknit-id-review.html

<a href=http://www.ukbriberyact2010.co.uk/nike-free-5.0-2015-black-188.html>Nike Free 5.0 2015 Black</a>

<a href=http://www.accomlink.co.uk/superstar-adidas-gold-toe-829>Superstar Adidas Gold Toe</a>

<a href=http://www.mmua.co.uk/104-nike-cortez-trainers-blue.html>Nike Cortez Trainers Blue</a>

<a href=http://www.offerzone.co.uk/965-converse-size-6.5-uk.htm>Converse Size 6.5 Uk</a>

<a href=http://www.hairextensionscity.co.uk/999-nike-roshe-run-mens-galaxy.html>Nike Roshe Run Mens Galaxy</a>

http://www.ulviks.nl/408-nike-cortez-heren.html

http://www.avarusmedia.de/oakley-jupiter-squared-847.php

http://www.wvaegir.nl/adidas-superstar-blauw-maat-39-379.php

http://www.visrestaurantlepescadou.nl/509-stan-smith-rood-suede.html

http://www.kfz-haftpflichtversicherung-kuendigen.de/mbt-schuhe-kaufen-177.php

<a href=http://www.ojc-backstage.nl/049-converse-sneakers-leer-wit.php>Converse Sneakers Leer Wit</a>

<a href=http://www.zorgboerderijdaglicht.nl/884-nike-free-run-2-dames-kopen.htm>Nike Free Run 2 Dames Kopen</a>

<a href=http://www.ojc-backstage.nl/007-converse-maat-22.php>Converse Maat 22</a>

<a href=http://www.nikeflyknitkaufen.de/nike-free-flyknit-4.0-blau-072>Nike Free Flyknit 4.0 Blau</a>

<a href=http://www.erokerswebshop.nl/215-marktplaats-abercrombie-fitch.php>Marktplaats Abercrombie Fitch</a>

http://www.felipealonso.es/752-tienda-vans-baratas-madrid.html

http://www.aspasi.es/627-saucony-xodus-iso-test.htm

http://www.tacadetinta.es/vestidos-abercrombie-para-mujer

http://www.colegio-sanfranciscojavier.es/854-timberland-shoes-peru.html

http://www.bristol.com.es/zapatos-nike-force-one-2015-988.html

<a href=http://www.grupoitealbacete.es/661-puma-tenis-bmw.html>Puma Tenis Bmw</a>

<a href=http://www.cdoviaplata.es/comprar-mbt-españa-999.html>Comprar Mbt España</a>

<a href=http://www.sedar2013.es/gorras-chicago-bulls-2017-131.php>Gorras Chicago Bulls 2017</a>

<a href=http://www.sedar2013.es/gorras-memphis-grizzlies-259.php>Gorras Memphis Grizzlies</a>

<a href=http://www.elregalofriki.es/ray-ban-justin-rb4165-249.php>Ray Ban Justin Rb4165</a>

http://www.ljungkyrkan.nu/240-reebok-tenisky-panske.htm

http://www.cliftonrestaurant.co.uk/nike-shox-orange-and-grey-557.php

http://www.c-p-c.fr/998-adidas-yeezy-boost-350-v2.htm

http://www.super8-ilfilm.it/545-adidas-high-tops-amazon

http://www.koupelny-hed.cz/asics-obuv-na-volejbal.aspx

<a href=http://www.cliftonrestaurant.co.uk/nike-free-2.0-flyknit-570.php>Nike Free 2.0 Flyknit</a>

<a href=http://www.koupelny-hed.cz/converse-boty.aspx>Converse Boty</a>

<a href=http://www.musicalface.nl/625-adidas-rose-adizero-2.5.htm>Adidas Rose Adizero 2.5</a>

<a href=http://www.fiashosting.se/vita-reebok-easytone-156.php>Vita Reebok Easytone</a>

<a href=http://www.musicalface.nl/062-adidas-y3-pure-boost.htm>Adidas Y3 Pure Boost</a>

http://www.jonsvensdesign.se/468-nike-hypervenom-phantom-barn.php

http://www.vecchiaarena.it/nike-air-max-2016-prezzo-914.html

http://www.ljungkyrkan.nu/722-vans-tenisky-bordove.htm

http://www.laluna-rouen.fr/708-nike-flyknit-racer-oreo-1.0.html

http://www.super8-ilfilm.it/529-adidas-harden-dark-ops

<a href=http://www.whitneymcveigh.co.uk/nike-hyperlive-black-057.php>Nike Hyperlive Black</a>

<a href=http://www.freiberufler-netzwerk.de/653-adidas-tubular-radial-black-and-white.php>Adidas Tubular Radial Black And White</a>

<a href=http://www.herz-jesu-huellen.de/nike-cortez-schwarz-weiĂź-042.html>Nike Cortez Schwarz WeiĂź</a>

<a href=http://www.bliv-ergoterapeut.nu/tĂŞnis-timberland-gorge-c2---castanho-685.php>TĂŞnis Timberland Gorge C2 - Castanho</a>

<a href=http://www.trisportteam.cz/529-saucony-peregrine-6.html>Saucony Peregrine 6</a>

http://www.rcaraumo.es/stefan-janoski-max-granates-738.html

http://www.denisemilani.es/053-nike-air-max-90-hyp-premium-id-baratas.php

http://www.lubpsico.es/zapatos-adidas-de-hombre-2015-606.html

http://www.rcaraumo.es/huaraches-blancas-855.html

http://www.grupoitealbacete.es/206-puma-fenty-rihanna-creepers.html

<a href=http://www.colegio-sanfranciscojavier.es/940-timberlands-boots-colors.html>Timberlands Boots Colors</a>

<a href=http://www.felipealonso.es/097-vans-old-skool-rojas-mujer.html>Vans Old Skool Rojas Mujer</a>

<a href=http://www.sedar2013.es/atlanta-falcons-gorras-297.php>Atlanta Falcons Gorras</a>

<a href=http://www.aspasi.es/984-saucony-jazz-17-naranja.htm>Saucony Jazz 17 Naranja</a>

<a href=http://www.cdoviaplata.es/mbt-online-822.html>Mbt Online</a>

http://www.frbk.se/nhl-keps-flashback.html

http://www.alcius.es/adidas-nmd-xr1-558.html

http://www.pop-event.fr/nike-hypervenom-gris-353.php

http://www.tarvisioscacchi.it/282-adidas-superstar-bianche-e-nere-pitonate.html

http://www.slojdakademin.se/084-puma-skor-jr.php

<a href=http://www.laluna-rouen.fr/056-nike-air-max-90-essential-total-crimson.html>Nike Air Max 90 Essential Total Crimson</a>

<a href=http://www.jonsvensdesign.se/254-nike-air-force-low.php>Nike Air Force Low</a>

<a href=http://www.pop-event.fr/nike-kobe-xi-223.php>Nike Kobe Xi</a>

<a href=http://www.alcius.es/adidas-nmd-blancas-y-doradas-823.html>Adidas Nmd Blancas Y Doradas</a>

<a href=http://www.vecchiaarena.it/nike-silver-97-on-feet-291.html>Nike Silver 97 On Feet</a>

http://www.hollybushwitney.co.uk/345-adidas-basketball-shoes-gold-and-white.html

http://www.tarvisioscacchi.it/057-adidas-zx-flux-gold-and-black-prezzo.html

http://www.ljungkyrkan.nu/996-reebok-nano-6.0-heureka.htm

http://www.sposobynazdrowie.pl/638-puma-rihanna-creepers-sklep.php

http://www.kristiinakoskentola.nl/638-nike-shox-outlet.html

<a href=http://www.nocnsfsportconventie.nl/893-adidas-schoenen-dames.php>Adidas Schoenen Dames</a>

<a href=http://www.hollybushwitney.co.uk/002-adidas-y-3-crazy-explosive.html>Adidas Y-3 Crazy Explosive</a>

<a href=http://www.jonsvensdesign.se/368-nike-hypervenom-phantom-ag-pris.php>Nike Hypervenom Phantom Ag Pris</a>

<a href=http://www.c-p-c.fr/764-adidas-originals.htm>Adidas Originals</a>

<a href=http://www.programfurora.pl/215-converse-gorillaz.php>Converse Gorillaz</a>

</a>

<a href=http://datingice.com/>Dating Relationship Love

</a>

<a href=http://rhdating.com/>Best Online Dating Sites

</a>

<a href=http://sexdatingdelight.com/>Sex Dating Delight

</a>

http://adultdatingbrisbane.com/

http://datingice.com/

http://rhdating.com/

http://sexdatingdelight.com/

http://www.lessoinsdemariemassageenergetique.fr/169-chaussures-ralph-lauren-odie.php

http://www.thierryobadia.fr/025-palladium-blanche-femme.html

http://www.miolands-mode-video.fr/851-supra-skytop-or.php

http://www.lesdroles.fr/357-chaussure-lacoste-noir-et-blanche.php

http://www.forge-delours.fr/386-le-coq-sportif-arthur-ashe-noir.html

<a href=http://www.miolands-mode-video.fr/286-basket-supra-forum.php>Basket Supra Forum</a>

<a href=http://www.forge-delours.fr/771-le-coq-sportif-lcs-r900-w-glitter.html>Le Coq Sportif Lcs R900 W Glitter</a>

<a href=http://www.minitrain.fr/256-fila-shoes-2017.htm>Fila Shoes 2017</a>

<a href=http://www.messengercity.fr/052-mizuno-wave-ultima-soldes.php>Mizuno Wave Ultima Soldes</a>

<a href=http://www.miolands-mode-video.fr/058-chaussure-supra-femme.php>Chaussure Supra Femme</a>

http://www.miolands-mode-video.fr/293-supra-skytop-3-avis.php

http://www.forge-delours.fr/571-coq-sportif-bebe.html

http://www.dany-multi-services.fr/908-under-armour-basket-curry.php

http://www.barreau-de-saint-pierre.fr/090-salomon-speedcross-3-femme.php

http://www.lesdroles.fr/298-chaussure-lacoste-femme-rouge.php

<a href=http://www.messengercity.fr/366-mizuno-wave-prophecy-4-femme.php>Mizuno Wave Prophecy 4 Femme</a>

<a href=http://www.forge-delours.fr/555-coq-sportif-nouvelle-collection.html>Coq Sportif Nouvelle Collection</a>

<a href=http://www.miolands-mode-video.fr/132-mbt-geneve.php>Mbt Geneve</a>

<a href=http://www.barreau-de-saint-pierre.fr/463-chaussures-salomon-homme-gore-tex.php>Chaussures Salomon Homme Gore Tex</a>

<a href=http://www.messengercity.fr/502-basket-mizuno.php>Basket Mizuno</a>

http://www.hardsoftinformatica.it/skechers-memory-foam-uomo-zalando-145.php

http://www.fashiontour.it/899-scarpe-gucci-estate-2017.php

http://www.icamsrl.it/scarpe-tacco-12-nere-800.htm

http://www.gianly.it/194-mizuno-wave-rider-20-uomo.asp

http://www.gli-gnomi.it/scarpe-calcio-puma-due-colori-605.php

<a href=http://www.hardsoftinformatica.it/scarpe-mbt-offerte-online-477.php>Scarpe Mbt Offerte Online</a>

<a href=http://www.handballestense.it/supra-skytop-4-602.htm>Supra Skytop 4</a>

<a href=http://www.gianly.it/909-salomon-running.asp>Salomon Running</a>

<a href=http://www.iccmornago.it/calzature-alexander-mcqueen-629.htm>Calzature Alexander Mcqueen</a>

<a href=http://www.gianly.it/815-scarpe-mizuno-volley-2017.asp>Scarpe Mizuno Volley 2017</a>

http://www.maurogiulianini.it/647-negozio-tods-uomo-roma.htm

http://www.nokeys.it/449-immagini-scarpe-da-calcio-puma.php

http://www.metrocampania.it/skechers-uomo-running-861.html

http://www.laviadiemmaus.it/scarpe-louboutin-prezzi-yahoo-612.htm

http://www.maurogiulianini.it/224-scarpe-polo-ralph-lauren-hanford-mid.htm

<a href=http://www.libeativoli.it/sito-ufficiale-giuseppe-zanotti-996.html>Sito Ufficiale Giuseppe Zanotti</a>

<a href=http://www.monitortft.it/giuseppe-zanotti-scarpe-napoli-721.htm>Giuseppe Zanotti Scarpe Napoli</a>

<a href=http://www.netfraternity.it/198-under-armour-shoes.asp>Under Armour Shoes</a>

<a href=http://www.lombardoeditore.it/vibram-bikila-evo-465.html>Vibram Bikila Evo</a>

<a href=http://www.maurogiulianini.it/074-mocassini-tods-scontati.htm>Mocassini Tod's Scontati</a>

http://www.tibinet.it/scarpe-armani-nere-194.asp

http://www.villarianna.it/scarpe-tacchi-altissimi-online-203.asp

http://www.stonefree.it/336-sneakers-dolce-e-gabbana.htm

http://www.veneto-arte.it/mizuno-volleyball-289.php

http://www.stonefree.it/216-burberry-scarpe-uomo.htm

<a href=http://www.skydivepalermo.it/vibram-fivefingers-negozi-milano-916.asp>Vibram Fivefingers Negozi Milano</a>

<a href=http://www.tibinet.it/armani-scarpe-donne-379.asp>Armani Scarpe Donne</a>

<a href=http://www.stonefree.it/882-scarpe-armani-donne-2016.htm>Scarpe Armani Donne 2016</a>

<a href=http://www.skunky.it/230-hogan-grigie-interactive.php>Hogan Grigie Interactive</a>

<a href=http://www.villaprati.it/adidas-ace-16.1-nere-564.htm>Adidas Ace 16.1 Nere</a>

http://www.mozartfest.it/443-nike-di-tela-grigie.php

http://www.maquillajeypeluquerianovias.es/tenis-adidas-ultra-boost-feminino-501.html

http://www.maquillajeypeluquerianovias.es/zapatillas-adidas-mujer-deportivas-2015-640.html

http://www.mozartfest.it/973-jordan-raptor.php

http://www.maquillajeypeluquerianovias.es/adidas-rose-3-016.html

<a href=http://www.oceansurf.es/nike-mercurial-vapor-x-fg-cr7-111.php>Nike Mercurial Vapor X Fg Cr7</a>

<a href=http://www.maquillajeypeluquerianovias.es/adidas-pure-boost-x-women-562.html>Adidas Pure Boost X Women</a>

<a href=http://www.myranch.es/899-jeremy-scott-zapatillas.php>Jeremy Scott Zapatillas</a>

<a href=http://www.omnia-equip-auto.fr/539-air-force-fluo.html>Air Force Fluo</a>

<a href=http://www.myranch.es/361-boost-adidas-basketball.php>Boost Adidas Basketball</a>

http://www.deadlikeme.it/874-scarpe-tacco-inverno.htm

http://www.handballestense.it/scarpe-palladium-milano-852.htm

http://www.gli-gnomi.it/scarpe-da-calcio-con-calzino-senza-lacci-441.php

http://www.deadlikeme.it/080-scarpe-tacco-grosso-2017.htm

http://www.deadlikeme.it/479-scarpe-tacco-alto-da-uomo.htm

<a href=http://www.confartigianatomirano.it/manolo-blahnik-prezzi-789.htm>Manolo Blahnik Prezzi</a>

<a href=http://www.fashiontour.it/524-gucci-scarpe-uomo-usate.php>Gucci Scarpe Uomo Usate</a>

<a href=http://www.gli-gnomi.it/scarpe-da-calcio-per-bambini-di-8-anni-184.php>Scarpe Da Calcio Per Bambini Di 8 Anni</a>

<a href=http://www.gianly.it/729-mizuno-rider-19-osaka.asp>Mizuno Rider 19 Osaka</a>

<a href=http://www.gianly.it/439-mizuno-volley-alte.asp>Mizuno Volley Alte</a>

<a href=" http://achetersildenafilenligne.com/ ">acheter viagra 100mg</a>

<a href=" http://acheterviagraenlignelivraison24h.com/ ">acheter viagra en ligne livraison 24h </a>

<a href=" http://achatviagraenpharmacieenfrance.com/ ">vente de viagra en pharmacie en france</a>

<a href=" http://sildenafilpascherenfrance.com/ ">viagra pas cher </a>

<a href=" http://viagrapascherlivraisonrapide.com/ ">viagra pas cher </a>

<a href=" http://sildenafilpfizer50mgprix.com/ ">sildenafil pfizer 50 mg preis </a>

<a href=" http://viagraenventelibreenfrance.com/ ">viagra en vente libre en france </a>

<a href=" http://xn--viagragnriquelivresous48h-hicb.com/ ">viagra gГ©nГ©rique belgique </a>

<a href=" http://xn--cialisgnriquepascher-h2bb.com/ ">cialis gГ©nГ©rique pas cher </a>

<a href=" http://cialissansordonnanceenfrance.com/ ">cialis sans ordonnance en pharmacie </a>

<a href=" http://achetercialisenfrancesitefiable.com/ ">acheter cialis en france livraison rapide </a>

<a href=" http://achetercialissansordonnanceenpharmacie.com/ ">comment acheter cialis sans ordonnance </a>

<a href=" http://achetercialis20mgenligne.com/ ">achat cialis 20mg en ligne </a>

<a href=" http://achatcialisenfrancelivraisonrapide.com ">acheter cialis generique en france </a>

<a href=" http://achatcialis5mgenligne.com/ ">acheter cialis 5mg pas cher </a>

<a href=" http://tadalafil20mgpaschereninde.com/ ">acheter cialis 20mg pas cher </a>

<a href=" http://achetertadalafil20mgpascher.com/ ">achat cialis 20mg en ligne </a>

http://www.anonimoitaliano.it/232-nike-hypervenom-phantom-3.htm

http://www.angelocomisso.it/302-nike-hypervenom-2016.html

http://www.ascdiromagna.it/045-mbt-scarpe-vendita-on-line.htm

http://www.clubdelcosto.it/179-stivali-bassi-giuseppe-zanotti.asp

http://www.clubdelcosto.it/087-sandali-flat-valentino.asp

<a href=http://www.ascdiromagna.it/764-skechers-memory-foam-solette.htm>Skechers Memory Foam Solette</a>

<a href=http://www.angelocomisso.it/530-nike-mercurial-vapor-x-2016.html>Nike Mercurial Vapor X 2016</a>

<a href=http://www.al-parco.it/551-nike-magista-2016.html>Nike Magista 2016</a>

<a href=http://www.biellaintraprendere.it/mizuno-ultima-8-opinioni-214.html>Mizuno Ultima 8 Opinioni</a>

<a href=http://www.calzaturificiorenata.it/gucci-sneaker-171.htm>Gucci Sneaker</a>

<a href=" http://achetertadalafilsansordonnance.com/ ">achat cialis sans ordonnance </a>

<a href=" http://achattadalafilenfranceenpharmacie.com/ ">acheter tadalafil en france </a>

<a href=" http://acheterprednisone20mgenligne.com/ ">acheter prednisone 20 mg en ligne </a>

<a href=" http://acheterpropeciasurinternet.com/ ">acheter propecia sur internet </a>

<a href=" http://achatpropeciaparcartebancaire.com/ ">achat propecia partners </a>

<a href=" http://achatamoxicillinebiogaran1g.com/ ">amoxicilline 1g achat en ligne </a>

<a href=" http://acheterclomid100mgsurinternetpascher.com/ ">acheter clomid 100mg sur internet pas cher </a>

<a href=" http://acheterventolinespraysansordonnance.com/ ">acheter ventolin - acheter ventolin: </a>

<a href=" http://achatventolinesansordonnance.com/ ">achat ventoline sur internet </a>

<a href=" http://howtogetviagrawithoutadoctorprescription.us/ ">how to get viagra without going to a doctor </a>

<a href=" http://acheterzithromaxsurinternetenfrance.club/ ">acheter zithromax monodose sans ordonnance </a>

Make an effort to shed weight. Apnea is exacerbated and often brought on by excessive weight. Consider losing ample weight to move your BMI from over weight to merely overweight or even wholesome. Folks who suffer from lost excess weight have improved their apnea signs and symptoms, plus some have even treated their obstructive sleep apnea totally.

þÿ

You may not think so, but design is around trying to keep a wide open brain and enabling yourself to determine more of who you are. There are many useful sources to assist you to read more about fashion. Keep in mind the tips you've study right here as you may operate your way in the direction of greater design.Very Successful Plumbing Recommendations That Work Nicely

<a href=https://www.nocnsfsportconventie.nl/images/nocnsfsportcon/1643-adidas-schoenen-heren-nieuwe.jpg>Adidas Schoenen Heren Nieuwe</a>

þÿ

That will help you cope with ringing in the ears you need to prevent demanding conditions. Long periods of tension can make the tinnitus noises a lot louder compared to what they could be if you are in peaceful state. In order to support handle your ringing in the ears and not allow it to be even worse, you should attempt and live your life using the minimum quantity of pressure.

<img>https://www.berwynmountainpress.co.uk/images/img-ber/871-adidas-los-angeles-white-women.jpg</img>

Comprehending the sources of a panic attack can certainly produce a new strategy to strategy them. As soon as a person knows the sparks that kindle their panic attacks, they may be much better equipped to handle or avoid the attacks altogether. You can use this content under to discover ways to steer clear of your anxiety and panic attacks too.

<a href=https://www.alcius.es/images/alcius/3069-adidas-ultra-boost-negras-mujer.jpg>Adidas Ultra Boost Negras Mujer</a>

<img>https://www.freiberufler-netzwerk.de/images/img/4688-adidas-zx-flux-rose-gold.jpg</img>

http://www.ilmondodicrepax.it/487-adidas-ace-rosse.htm

http://www.ipssarcascino.it/818-alexander-mcqueen-tacchi.aspx

http://www.istitutocomprensivospezzanosila.it/golden-goose-bambina-rosa-291.htm

http://www.icamsrl.it/scarpe-tacco-aperte-771.htm

http://www.laprealpinagiorn.it/578-adidas-messi-16-pureagility.htm

<a href=http://www.istitutocomprensivospezzanosila.it/golden-goose-glitter-argento-859.htm>Golden Goose Glitter Argento</a>

<a href=http://www.ipssarcascino.it/841-scarpe-jimmy-choo-swarovski.aspx>Scarpe Jimmy Choo Swarovski</a>

<a href=http://www.hardsoftinformatica.it/negozi-di-scarpe-mbt-torino-362.php>Negozi Di Scarpe Mbt Torino</a>

<a href=http://www.laprealpinagiorn.it/217-adidas-x-16.1-sg.htm>Adidas X 16.1 Sg</a>

<a href=http://www.ilmondodicrepax.it/650-adidas-x16.2.htm>Adidas X16.2</a>

http://www.handballestense.it/scarpe-fila-a-roma-265.htm

http://www.handballestense.it/scarpe-under-armour-offerta-245.htm

http://www.florenceinn.it/998-scarpe-chanel-2016.htm

http://www.gli-gnomi.it/scarpe-da-calcio-senza-lacci-per-bambini-746.php

http://www.deadlikeme.it/451-scarpe-tacco-grosso-estive.htm

<a href=http://www.florenceinn.it/439-scarpe-armani-uomo-outlet.htm>Scarpe Armani Uomo Outlet</a>

<a href=http://www.handballestense.it/supra-scarpe-femminili-prezzo-128.htm>Supra Scarpe Femminili Prezzo</a>

<a href=http://www.deadlikeme.it/606-scarpe-tacco-grosso-invernali.htm>Scarpe Tacco Grosso Invernali</a>

<a href=http://www.francomarras.it/clarks-abbinamenti-528.htm>Clarks Abbinamenti</a>

<a href=http://www.gli-gnomi.it/scarpe-calcio-puma-powercat-1.10-927.php>Scarpe Calcio Puma Powercat 1.10</a>

http://www.clubdelcosto.it/063-scarpe-giuseppe-zanotti-usate.asp

http://www.angelocomisso.it/849-nike-mercurial-limited-edition.html

http://www.battagliamontecassino.it/jimmy-choo-fucsia-307.html

http://www.calzaturificiorenata.it/sneakers-alte-louis-vuitton-uomo-724.htm

http://www.calzaturificiorenata.it/scarpe-gucci-sportive-uomo-471.htm

<a href=http://www.biellaintraprendere.it/mizuno-wave-rider-20-amazon-431.html>Mizuno Wave Rider 20 Amazon</a>

<a href=http://www.anonimoitaliano.it/632-nike-magista-nere.htm>Nike Magista Nere</a>

<a href=http://www.battagliamontecassino.it/jimmy-choo-emily-737.html>Jimmy Choo Emily</a>

<a href=http://www.biellaintraprendere.it/mizuno-tennis-shoes-834.html>Mizuno Tennis Shoes</a>

<a href=http://www.biellaintraprendere.it/mizuno-scarpe-152.html>Mizuno Scarpe</a>

http://www.traiteur-janot.fr/067-nike-kaishi-2.0.html

http://www.usocalcio.it/vans-invernali-uomo-861.php

http://www.triwatt.fr/nike-orange-blanche-971.php

http://www.zorgcentrumdewissel.nl/nike-runner-2-645.php

http://www.volumefnucci.it/reebok-bianche-e-rosa-306.asp

<a href=http://www.decoriblearagusa.it/nike-thea-womens-085.php>Nike Thea Womens</a>

<a href=http://www.triwatt.fr/nike-free-ace-leather-264.php>Nike Free Ace Leather</a>

<a href=http://www.traiteur-janot.fr/146-free-run-2-femme-noir.html>Free Run 2 Femme Noir</a>

<a href=http://www.weltgang.de/935-puma-schuhe-rot-schwarz.htm>Puma Schuhe Rot Schwarz</a>

<a href=http://www.song-net.fr/959-air-force-1-noir-et-blanche.html>Air Force 1 Noir Et Blanche</a>

http://www.ace-renov.fr/916-asics-running-homme-noir.php

http://www.dresden2020.de/442-nike-cortez-damen-leder.php

http://www.extreme-hosting.co.uk/529-nike-air-max-90-ultra-breathe-trainers.php

http://www.dresden2020.de/857-nike-blazer-weiß.php

http://www.aurelieadomicile.fr/077-puma-chaussure-homme-2017-ducati.php

<a href=http://www.campingcarsonway.fr/039-yeezy-350-kanye-west.html>Yeezy 350 Kanye West</a>

<a href=http://www.extreme-hosting.co.uk/592-nike-blazer-black-leather.php>Nike Blazer Black Leather</a>

<a href=http://www.aurelieadomicile.fr/399-chaussures-puma-noir.php>Chaussures Puma Noir</a>

<a href=http://www.extreme-hosting.co.uk/589-nike-racer-flyknit-2016.php>Nike Racer Flyknit 2016</a>

<a href=http://www.aldente-restos.fr/chaussures-saucony-973.php>Chaussures Saucony</a>

http://www.luxavideo.it/405-michael-kors-ava-small.asp

http://www.fulchiron.fr/838-ray-ban-clubmaster-ecaille-pas-cher.html

http://www.vom-eulenloch.de/nike-free-5.0-damen-neu-537.htm

http://www.soulfly-design.de/398-puma-evospeed.php

http://www.eventus-traders.de/391-new-balance-damen-wl574v1-sneakers.html

<a href=http://www.francoismauduit.fr/400-ray-ban-wayfarer-interieur-rouge.php>Ray Ban Wayfarer Interieur Rouge</a>

<a href=http://www.hotel4alle.de/nike-air-max-rosa-pink-694.aspx>Nike Air Max Rosa Pink</a>

<a href=http://www.geo2008.de/fila-turnschuhe-801.php>Fila Turnschuhe</a>

<a href=http://www.nkavmig.se/nike-roshe-nm-flyknit-se-675.php>Nike Roshe Nm Flyknit Se</a>

<a href=http://www.vacu-step.fr/976-michael-kors-hamilton-noir.php>Michael Kors Hamilton Noir</a>

http://www.ardaland.it/748-birkenstock-uomo-boston.asp

http://www.angelocomisso.it/989-nike-magista-obra-2-total-crimson.html

http://www.calzaturificiorenata.it/gucci-scarpe-decolte-806.htm

http://www.clubdelcosto.it/000-giuseppe-zanotti-saldi-milano.asp

http://www.angelocomisso.it/545-nike-mercurial-cr7-2015.html

<a href=http://www.ardaland.it/687-birkenstock-roma.asp>Birkenstock Roma</a>

<a href=http://www.angelocomisso.it/219-nike-tiempo-proximo-tf.html>Nike Tiempo Proximo Tf</a>

<a href=http://www.battagliamontecassino.it/scarpe-manolo-blahnik-mostra-767.html>Scarpe Manolo Blahnik Mostra</a>

<a href=http://www.biellaintraprendere.it/salomon-scarpe-uomo-251.html>Salomon Scarpe Uomo</a>

<a href=http://www.clubdelcosto.it/674-sandali-giuseppe-zanotti.asp>Sandali Giuseppe Zanotti</a>

http://www.ardaland.it/307-birkenstock-scarpe.asp

http://www.anonimoitaliano.it/432-nike-magista-obra-2-fg.htm

http://www.biellaintraprendere.it/salomon-trail-632.html

http://www.al-parco.it/433-nike-mercurial-verde-acqua.html

http://www.clubdelcosto.it/252-valentino-scarpe-uomo-camouflage.asp

<a href=http://www.battagliamontecassino.it/sandali-manolo-blahnik-2017-737.html>Sandali Manolo Blahnik 2017</a>

<a href=http://www.biellaintraprendere.it/salomon-scarpe-invernali-100.html>Salomon Scarpe Invernali</a>

<a href=http://www.al-parco.it/847-nike-magista-verdi.html>Nike Magista Verdi</a>

<a href=http://www.angelocomisso.it/596-nike-superfly-r4.html>Nike Superfly R4</a>

<a href=http://www.al-parco.it/927-nike-magista-rosse-alte.html>Nike Magista Rosse Alte</a>

http://www.amstructures.co.uk/adidas-gazelle-childrens-466.html

http://www.postenblankestijn.nl/334-nike-air-max-90-outfit.htm

http://www.maxicolor.nl/nike-shox-dames-205.html

http://www.evcd.nl/nike-presto-flyknit-black-056.html

http://www.spanish-realestate.es/599-adidas-f50-2010.asp

<a href=http://www.xingbang.es/botas-vibram-gore-tex-634.html>Botas Gore-tex</a>

<a href=http://www.maxicolor.nl/nike-roshe-run-black-376.html>Nike Roshe Run Black</a>

<a href=http://www.cheap-laptop-battery.co.uk/505-adidas-stan-smith-baby.htm>Adidas Stan Smith Baby</a>

<a href=http://www.cazarafashion.nl/groen-nike-777.htm>Groen Nike</a>

<a href=http://www.4chat.nl/asics-gel-lyte-iii-wit-365.html>Asics Gel Lyte Iii Wit</a>

http://www.graysands.co.uk/nike-basketball-shoes-hyperdunk-2016-016.asp

http://www.consumabulbs.co.uk/975-puma-x-graphersrock.html

http://www.kaptur.fr/635-fenty-puma-camel.html

http://www.onegame.fr/new-balance-996-bleu-ciel-160.php

http://www.los-granados-apartment.co.uk/599-adidas-climacool-mens-running-shoes.html

<a href=http://www.graysands.co.uk/nike-free-run-5.0-womens-black-2015-661.asp>Nike Free Run 5.0 Womens Black 2015</a>

<a href=http://www.graysands.co.uk/nike-running-shoes-women-blue-458.asp>Nike Running Shoes Women Blue</a>

<a href=http://www.los-granados-apartment.co.uk/646-stan-smith-primeknit-white-green.html>Stan Smith Primeknit White Green</a>

<a href=http://www.lesfeesbouledeneige.fr/puma-rihanna-femme-963.html>Puma Rihanna Femme</a>

<a href=http://www.los-granados-apartment.co.uk/729-adidas-gazelle-on-feet-women.html>Adidas Gazelle On Feet Women</a>

http://www.maxicolor.nl/nike-2016-heren-zalando-327.html

http://www.cattery-a-naturesgift.nl/oakley-fives-squared-white-774.php

http://www.pcdehoefijzertjes.nl/hermes-riem-heren-kopen-846.php

http://www.taxi-eikhout.nl/646-nike-air-max-2016-roze.htm

http://www.sparkelecvideo.es/950-jordan-nike-neymar.html

<a href=http://www.maxicolor.nl/stefan-janoski-max-wit-667.html>Stefan Janoski Max Wit</a>

<a href=http://www.professionalplan.es/reebok-verdes-399.php>Reebok</a>

<a href=http://www.cattery-a-naturesgift.nl/round-ray-ban-glasses-515.php>Round Ray Ban Glasses</a>

<a href=http://www.spanish-realestate.es/011-botas-de-futbol-adidas-precio.asp>Botas Futbol</a>

<a href=http://www.ehev.es/012-boss-hugo-boss-calzado-zapatillas.htm>Boss Boss</a>

http://www.silo-france.fr/jeremy-scott-moins-cher-520.html

http://www.schatztruhe-assmann.de/air-jordan-eclipse-berlin-918.php

http://www.rebelscots.de/nike-free-5.0-schwarz-45-326.htm

http://www.silo-france.fr/nmd-blue-navy-467.html

http://www.plombier-chauffagiste-argaud.fr/asics-gel-kayano-ocean-pack-536.html

<a href=http://www.plombier-chauffagiste-argaud.fr/asics-bleu-et-orange-479.html>Asics Bleu Et Orange</a>

<a href=http://www.schatztruhe-assmann.de/nike-free-3.0-herren-gelb-857.php>Nike Free 3.0 Herren Gelb</a>

<a href=http://www.scellier-nantes.fr/654-adidas-nmd-r1-femme-beige.html>Adidas Nmd R1 Femme Beige</a>

<a href=http://www.rebelscots.de/nike-air-huarache-weiĂź-496.htm>Nike Air Huarache WeiĂź</a>

<a href=http://www.natydred.fr/762-basket-new-balance-femme-beige.html>Basket New Balance Femme Beige</a>

http://www.spanish-realestate.es/554-puma-football-mexico.asp

http://www.maxicolor.nl/nike-janoski-new-812.html

http://www.decoraciondeinterioresweb.es/oakley-radar-replica-904.php

http://www.academievoorpsychiatrie.nl/739-adidas-zx-700-dames-sale.html

http://www.softwaretutor.co.uk/946-adidas-shoes-yellow-black.htm

<a href=http://www.pcbodelft.nl/315-nike-dames-rood.html>Nike Dames Rood</a>

<a href=http://www.eltotaxi.nl/nike-air-max-bw-kopen-003.php>Nike Air Max Bw Kopen</a>

<a href=http://www.free-nokia-ringtones-now.co.uk/adidas-nmd-white-vintage-094.html>Adidas Nmd White Vintage</a>

<a href=http://www.top40ringtones.nl/adidas-schoenen-spezial-505.htm>Adidas Spezial</a>

<a href=http://www.bures.nl/812-mk-tassen-goedkoop.html>Mk Tassen Goedkoop</a>

http://www.ChaussureAdidasonlineoutlet.fr/670-stan-smith-young.htm

http://www.restaurant-traiteur-creuse.fr/adidas-tubular-original-womens-543.php

http://www.ChaussureAdidasonlineoutlet.fr/895-stan-smith-noir-cuir.htm

http://www.histoiresdinterieur.fr/adidas-ultra-boost-trainers-532.html

http://www.creagraphie.fr/472-adidas-zx-flux-ocean-homme.html

<a href=http://www.histoiresdinterieur.fr/adidas-ultra-boost-rose-gold-303.html>Adidas Ultra Boost Rose Gold</a>

<a href=http://www.estime-moi.fr/adidas-zx-flux-2.0-black-white-883.php>Adidas Zx Flux 2.0 Black White</a>

<a href=http://www.vivalur.fr/712-adidas-boost-endless-energy.php>Adidas Boost Endless Energy</a>

<a href=http://www.estime-moi.fr/adidas-flux-zx-blue-704.php>Adidas Flux Zx Blue</a>

<a href=http://www.leighannelittrell.fr/adidas-neo-easy-vulc-review-562.html>Adidas Neo Easy Vulc Review</a>

http://www.estime-moi.fr/adidas-zx-flux-2.0-amazon-765.php

http://www.leighannelittrell.fr/adidas-neo-sport-station-147.html

http://www.histoiresdinterieur.fr/adidas-ultra-boost-3.0-oreo-release-time-849.html

http://www.creagraphie.fr/730-adidas-zx-flux-all-black-woven.html

http://www.creagraphie.fr/175-adidas-flux-go-sport.html

<a href=http://www.vivalur.fr/439-adidas-ultra-boost-ace-16-red-limit.php>Adidas Ultra Boost Ace 16 Red</a>

<a href=http://www.ChaussureAdidasonlineoutlet.fr/493-adidas-superstar-east-river-rivalry.html>Adidas Superstar East River Rivalry</a>

<a href=http://www.histoiresdinterieur.fr/adidas-boost-ultra-uncaged-490.html>Adidas Boost Ultra Uncaged</a>

<a href=http://www.estime-moi.fr/adidas-zx-flux-fluo-980.php>Adidas Zx Flux Fluo</a>

<a href=http://www.estime-moi.fr/adidas-zx-flux-monochrome-915.php>Adidas Zx Flux Monochrome</a>

http://www.ugtrepsol.es/precio-christian-louboutin-118.php

http://www.cazarafashion.nl/sneakers-nike-wit-338.htm

http://www.theloanarrangers.co.uk/adidas-yeezy-boost-350-price-uk-066.php

http://www.pcbodelft.nl/439-nike-sneakers-camouflage.html

http://www.geadopteerden.nl/voetbalschoenen-dames-goedkoop-911.php

<a href=http://www.professionalplan.es/skechers-mujer-rebajas-942.php>Skechers Rebajas</a>

<a href=http://www.renardlecoq.nl/177-nike-ultra-moire.html>Nike Ultra Moire</a>

<a href=http://www.lexus-tiemens-arnhem.nl/585-skechers-go-walk.htm>Skechers Go Walk</a>

<a href=http://www.desmaeckvanspa.nl/208-balenciaga-dames-shoebaloo.html>Balenciaga Dames Shoebaloo</a>

<a href=http://www.poker-pai-gow.es/826-tenis-under-armour-curry-2.htm>Tenis Armour</a>

http://www.123gouter.fr/adidas-jeremy-scott-pas-cher-supra-894.php

http://www.extreme-hosting.co.uk/181-nike-air-max-95-ultra-womens.php

http://www.aldente-restos.fr/reebok-sneakers-white-705.php

http://www.assurance-csp.fr/asics-2016-gel-lyte-205.htm

http://www.artysols.fr/converse-shoes-2016-743.php

<a href=http://www.fashiondestock.fr/590-chaussure-jeremy-scott-bebe.php>Chaussure Jeremy Scott Bebe</a>

<a href=http://www.aurelieadomicile.fr/064-puma-creepers-rihanna-bleu.php>Puma Creepers Rihanna Bleu</a>

<a href=http://www.dresden2020.de/260-nike-air-force-one-schwarz.php>Nike Air Force One Schwarz</a>

<a href=http://www.campingcarsonway.fr/777-chaussure-adidas-homme-rouge.html>Chaussure Adidas Homme Rouge</a>

<a href=http://www.extreme-hosting.co.uk/991-nike-lebron-11.php>Nike Lebron 11</a>

http://www.sitesm.fr/685-adidas-neo-court-adapt-f39237.php

http://www.sitesm.fr/789-adidas-neo-hoops-grey.php

http://www.leighannelittrell.fr/adidas-neo-piona-allegro-929.html

http://www.creagraphie.fr/620-adidas-zx-flux-femme-noir-et-bronze.html

http://www.ChaussureAdidasonlineoutlet.fr/365-stan-smith-couleur-jaune.htm

[url=http://www.creagraphie.fr/365-adidas-zx-flux-cheetah-buy.html]Adidas Zx Flux Cheetah Buy[/url]

[url=http://www.estime-moi.fr/adidas-flux-noir-homme-574.php]Adidas Flux Noir Homme[/url]

[url=http://www.ChaussureAdidasonlineoutlet.fr/402-superstar-adidas-motif.html]Superstar Adidas Motif[/url]

[url=http://www.climat-concept.fr/adidas-eqt-og-white-688.html]Adidas Eqt Og White[/url]

[url=http://www.estime-moi.fr/adidas-zx-flux-arlequin-prix-612.php]Adidas Zx Flux Arlequin Prix[/url]

http://www.estime-moi.fr/adidas-zx-flux-jd-junior-151.php

http://www.gorrasnewerasnapback.es/gorras-new-york-yankees-452.php

http://www.ChaussureAdidasonlineoutlet.fr/266-adidas-superstar-high.htm

http://www.sitesm.fr/643-adidas-neo-vlneo-hoops-mid-trainers.php

http://www.sitesm.fr/550-adidas-neo-selena-gomez-chaussure.php

[url=http://www.estime-moi.fr/adidas-zx-flux-floral-amazon-259.php]Adidas Zx Flux Floral Amazon[/url]

[url=http://www.leighannelittrell.fr/adidas-neo-womens-city-racer-w-running-shoe-411.html]Adidas Neo Womens City Racer W[/url]

[url=http://www.vivalur.fr/232-adidas-boost-for-ladies.php]Adidas Boost For Ladies[/url]

[url=http://www.ChaussureAdidasonlineoutlet.fr/638-adidas-superstar-bleu-animal.html]Adidas Superstar Bleu Animal[/url]

[url=http://www.ChaussureAdidasonlineoutlet.fr/663-stan-smith-collector.html]Stan Smith Collector[/url]

http://www.xingbang.es/zapatillas-salomon-para-mujer-264.html

http://www.xivcongresoahc.es/oakley-fuel-cell-polarized-307.php

http://www.wervjournaal.nl/073-gucci-schoenen-voor-dames.html

http://www.groenlinks-nh.nl/stan-smith-maat-24-311.html

http://www.geadopteerden.nl/adidas-messi-16-346.php

[url=http://www.manegehopland.nl/louboutins-black-413.php]Louboutins Black[/url]

[url=http://www.harlingen-havenstad.nl/hugo-boss-sneakers-heren-aanbieding-595.html]Hugo Boss Sneakers Heren Aanbieding[/url]

[url=http://www.free-nokia-ringtones-now.co.uk/adidas-los-angeles-vintage-white-amp-raw-purple-577.html]Adidas Los Angeles Vintage White & Raw Purple[/url]

[url=http://www.free-nokia-ringtones-now.co.uk/adidas-nmd-black-women-983.html]Adidas Nmd Black Women[/url]

[url=http://www.demetz.co.uk/adidas-zx-aqua-554.html]Adidas Zx Aqua[/url]

http://www.fashiondestock.fr/216-jeremy-scott-3.0-gold-wings.php

http://www.christelle-barbin.fr/784-converse-one-star-pro.php

http://www.christelle-barbin.fr/186-converse-all-star-dainty-canvas-ox-w.php

http://www.campingcarsonway.fr/583-adidas-noir-et-rose.html

http://www.assurance-csp.fr/asics-gel-lyte-3-argenté-410.htm

[url=http://www.123gouter.fr/adidas-gazelle-vintage-suede-pack-757.php]Adidas Gazelle Vintage Suede Pack[/url]

[url=http://www.123gouter.fr/adidas-neo-rose-fille-423.php]Adidas Neo Rose Fille[/url]

[url=http://www.123gouter.fr/jeremy-scott-wings-batman-863.php]Jeremy Scott Wings Batman[/url]

[url=http://www.artysols.fr/converse-all-star-noir-basse-068.php]Converse All Star Noir Basse[/url]

[url=http://www.fashiondestock.fr/438-nmd-noir-et-rouge.php]Nmd Noir Et Rouge[/url]

http://www.veilinghuiscoins-art.nl/adidas-sportschoenen-rood-588.html

http://www.thehappydays.nl/048-nike-md-runner-2-zwart-dames.php

http://www.amstructures.co.uk/adidas-neo-grey-and-blue-321.html

http://www.amorenomk.es/701-camisas-polo-para-niños.html

http://www.xivcongresoahc.es/ray-ban-redondas-sol-369.php

[url=http://www.vianed.nl/628-valentino-sneakers-outlet.html]Valentino Sneakers Outlet[/url]

[url=http://www.quantex.es/bolsos-louis-vuitton-242.php]Bolsos Vuitton[/url]

[url=http://www.professionalplan.es/tiendas-de-zapatillas-mbt-en-madrid-755.php]Tiendas Zapatillas[/url]

[url=http://www.professionalplan.es/reebok-modelos-clasicos-316.php]Reebok Clasicos[/url]

[url=http://www.mujerinnovadora.es/307-zapatos-verdes-tacon-bajo.asp]Zapatos Tacon[/url]

http://www.fiets4daagsekempenland.nl/390-puma-fenty-shoes.php

http://www.renardlecoq.nl/396-nike-schoenen-dames-groningen.html

http://www.wallbank-lfc.co.uk/745-adidas-ultra-boost-white-and-blue.htm

http://www.ehev.es/010-caterpillar-botas-2017.htm

http://www.auto-mobile.es/158-nike-mercurial-superfly-2015.php

[url=http://www.rechtswinkelalkmaar.nl/puma-creeper-velvet-zwart-027.html]Puma Creeper Velvet Zwart[/url]

[url=http://www.posicionamientotiendas.com.es/628-tenis-jordan-retro-3.html]Tenis Jordan Retro 3[/url]

[url=http://www.softwaretutor.co.uk/393-adidas-ultra-boost-solar-red-gradient.htm]Adidas Ultra Boost Solar Red Gradient[/url]

[url=http://www.evcd.nl/nike-air-force-multicolor-669.html]Nike Air Force Multicolor[/url]

[url=http://www.auto-mobile.es/603-puma-soccer-shoes.php]Puma Shoes[/url]

http://www.free-nokia-ringtones-now.co.uk/adidas-nmd-womens-green-546.html

http://www.rwpieters.nl/187-reebok-freestyle.html

http://www.lexus-tiemens-arnhem.nl/254-dior-shoes-2017.htm

http://www.desmaeckvanspa.nl/260-balenciaga-arena-high-heren.html

http://www.pcbodelft.nl/237-nike-cortez-brons.html

[url=http://www.cambiaexpress.es/gucci-zapatos-de-hombre-938.php]Gucci De[/url]

[url=http://www.auto-mobile.es/786-nike-mercurial-vapor-x-fg-cr7.php]Nike Vapor[/url]

[url=http://www.xingbang.es/skechers-go-walk-3-precio-430.html]Skechers Walk[/url]

[url=http://www.poker-pai-gow.es/956-skechers-diameter-blake-63385.htm]Skechers Blake[/url]

[url=http://www.active-health.nl/mizuno-wave-rider-19-535.htm]Mizuno Wave Rider 19[/url]

http://www.ehev.es/912-zapatos-dolce-gabbana-outlet.htm

http://www.poker-pai-gow.es/160-reebok-de-mujer-2016.htm

http://www.3500gt.nl/772-nike-hypervenom-2-neymar.php

http://www.evcd.nl/jordans-black-red-344.html

http://www.posicionamientotiendas.com.es/102-zapatillas-jordan-super-fly.html

[url=http://www.taxi-eikhout.nl/882-nike-air-max-90-cool-grey.htm]Nike Air Max 90 Cool Grey[/url]

[url=http://www.ruudschulten.nl/324-nike-huarache-ultra-wit.html]Nike Huarache Ultra Wit[/url]

[url=http://www.wervjournaal.nl/825-lacoste-schoenen-spartoo.html]Lacoste Schoenen Spartoo[/url]

[url=http://www.sarbot-team.es/695-camisetas-lacoste-baratas-chile.php]Camisetas Baratas[/url]

[url=http://www.carpetsmiltonkeynes.co.uk/252-adidas-nmd-xr1-duck-camo.html]Adidas Nmd Xr1 Duck Camo[/url]

http://www.la-baston.fr/adidas-zx-flux-bleu-marine-et-rose-164.html

http://www.lesfeesbouledeneige.fr/chaussure-puma-disc-blaze-219.html

http://www.lesfeesbouledeneige.fr/puma-basket-lacet-972.html

http://www.onegame.fr/new-balance-u420-femme-pas-cher-157.php

http://www.vansskooldskool.dk/sorte-vans-til-kvinder-902.php

[url=http://www.la-baston.fr/adidas-chaussure-gel-556.html]Adidas Chaussure Gel[/url]

[url=http://www.vansskooldskool.dk/vans-old-skool-high-268.php]Vans Old Skool High[/url]

[url=http://www.imprimerieexpress.fr/chaussure-puma-pas-cher-femme-785.php]Chaussure Puma Pas Cher Femme[/url]

[url=http://www.ileauxtresors.fr/yeezy-boost-350-camel-171.htm]Yeezy Boost 350 Camel[/url]

[url=http://www.lesfeesbouledeneige.fr/puma-platform-bleu-984.html]Puma Platform Bleu[/url]

http://www.probaiedumontsaintmichel.fr/325-new-balance-femme-574-noir-et-rose.php

http://www.viherio.fr/280-adidas-bebe-scratch.php

http://www.schatztruhe-assmann.de/nike-air-max-hyperfuse-neon-pink-642.php

http://www.viherio.fr/111-adidas-ultra-boost-homme.php

http://www.silo-france.fr/adidas-wings-prix-632.html

[url=http://www.weddingtiarasuk.co.uk/adidas-ultra-boost-3.0-734.php]Adidas Ultra Boost 3.0[/url]

[url=http://www.vansskooldskool.dk/vans-sko-læder-025.php]Vans Sko Læder[/url]

[url=http://www.weddingtiarasuk.co.uk/adidas-d-rose-7-x-sns-598.php]Adidas D Rose 7 X Sns[/url]

[url=http://www.rebelscots.de/nike-free-3.0-tĂĽrkis-damen-685.htm]Nike Free 3.0 TĂĽrkis Damen[/url]

[url=http://www.scellier-nantes.fr/246-adidas-jeremy-scott-bear.html]Adidas Jeremy Scott Bear[/url]

http://www.graysands.co.uk/nike-air-presto-on-feet-971.asp

http://www.ileauxtresors.fr/adidas-femme-tubular-377.htm

http://www.graysands.co.uk/nike-air-max-2015-black-and-blue-904.asp

http://www.los-granados-apartment.co.uk/928-adidas-shoes-stan-smith-pink.html

http://www.la-baston.fr/yeezy-rouge-adidas-900.html

[url=http://www.la-baston.fr/adidas-chaussure-2016-fille-148.html]Adidas Chaussure 2016 Fille[/url]

[url=http://www.ideelle.fr/725-asics-kayano-evo-black.html]Asics Kayano Evo Black[/url]

[url=http://www.la-baston.fr/ultra-boost-white-grey-134.html]Ultra Boost White Grey[/url]

[url=http://www.graysands.co.uk/nike-air-max-2015-mens-854.asp]Nike Air Max 2015 Mens[/url]

[url=http://www.lesfeesbouledeneige.fr/puma-suede-rose-clair-658.html]Puma Suede Rose Clair[/url]

http://www.poker-pai-gow.es/502-zapatos-mbt-catalogo.htm

http://www.pcc-bv.nl/nike-air-max-2016-bestellen-achteraf-betalen-261.htm

http://www.fiets4daagsekempenland.nl/482-puma-suede-classic-heren.php

http://www.juegosa.es/972-zapatillas-yves-saint-laurent.html

http://www.xingbang.es/timberland-botas-hombre-908.html

[url=http://www.demetz.co.uk/adidas-flux-tan-208.html]Adidas Flux Tan[/url]

[url=http://www.lexus-tiemens-arnhem.nl/048-balenciaga-runners-high-top.htm]Balenciaga Runners High Top[/url]

[url=http://www.tinget.es/adidas-x-16.3-2017-640.html]Adidas 16.3[/url]

[url=http://www.pcbodelft.nl/216-nike-schoenen-dames-zilver.html]Nike Schoenen Dames Zilver[/url]

[url=http://www.renardlecoq.nl/171-waar-kan-ik-nike-shox-kopen.html]Waar Kan Ik Nike Shox Kopen[/url]

http://www.free-nokia-ringtones-now.co.uk/adidas-neo-white-sneaker-542.html

http://www.tr-online.nl/825-adidas-zx-flux-zwart-roze.php

http://www.aoriginal.co.uk/adidas-superstar-high-tumblr-269.html

http://www.cdvera.es/001-tenis-mizuno.htm

http://www.desmaeckvanspa.nl/380-philipp-plein-schoen-sale.html

[url=http://www.evcd.nl/nike-pegasus-32-grijs-724.html]Nike Pegasus 32 Grijs[/url]

[url=http://www.professionalplan.es/zapatillas-fila-nuevos-modelos-665.php]Zapatillas Nuevos[/url]

[url=http://www.herbusinessuk.co.uk/387-adidas-superstar-navy-blue-suede.htm]Adidas Superstar Navy Blue Suede[/url]

[url=http://www.specialgroup.nl/125-adidas-superstar-rt-blue.htm]Adidas Rt[/url]

[url=http://www.xingbang.es/vans-nintendo-161.html]Vans[/url]

http://www.estime-moi.fr/adidas-zx-flux-bordeaux-courir-358.php

http://www.estime-moi.fr/adidas-zx-flux-black-womens-559.php

http://www.attitudesinde.fr/193-adidas-eqt-support-primeknit-white.php

http://www.vivalur.fr/691-adidas-ultra-boost-2015-test.php

http://www.creagraphie.fr/333-adidas-zx-flux-multicolor-size-14.html

[url=http://www.ChaussureAdidasonlineoutlet.fr/611-superstar-adidas-original.html]Superstar Adidas Original[/url]

[url=http://www.vivalur.fr/329-adidas-boost-3-oreo.php]Adidas Boost 3 Oreo[/url]

[url=http://www.climat-concept.fr/solebox-x-adidas-eqt-guidance-93-consortium-049.html]Solebox X Adidas Eqt Guidance 93[/url]

[url=http://www.histoiresdinterieur.fr/adidas-boost-2-red-648.html]Adidas Boost 2 Red[/url]

[url=http://www.vivalur.fr/466-adidas-boost-750-low.php]Adidas Boost 750 Low[/url]

http://www.leighannelittrell.fr/adidas-neo-v-racer-pas-cher-540.html

http://www.adidasschuheneu.de/688-adidas-sneaker-rot-blau.htm

http://www.adidasschuheneu.de/643-adidas-stan-smith-ray-pink.htm